UMass Amherst-Led Team Challenges Claims About Facebook’s Algorithm and Election Misinformation

An interdisciplinary team of researchers led by the University of Massachusetts Amherst recently published work in the prestigious journal Science calling into question the conclusions of a widely reported study, published in Science in 2023 and funded by Meta, which found that the company’s algorithms successfully filtered out untrustworthy news surrounding the 2020 election and were not major drivers of misinformation.

The UMass Amherst-led team’s work shows that the Meta-funded research was conducted during a short period when Meta temporarily introduced a new, more rigorous news algorithm rather than its standard one, and that the previous researchers did not account for the algorithmic change. This helped to create the misperception, widely reported by the media, that Facebook and Instagram’s news feeds are largely reliable sources of trustworthy news.

“The first thing that rang alarm bells for us,” says lead author Chhandak Bagchi, a graduate student in the Manning College of Information and Computer Science at UMass Amherst, “was when we realized that the previous researchers,” Guess et al., “conducted a randomized control experiment during the same time that Facebook had made a systemic, short-term change to their news algorithm.”

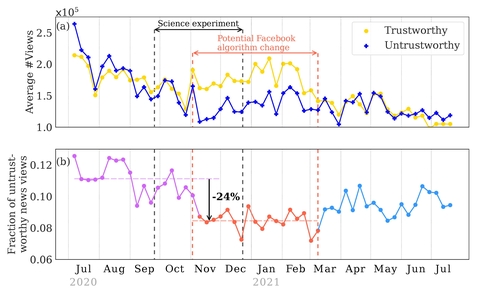

Beginning around the start of November 2020, Meta introduced 63 “break glass” changes to Facebook’s news feed, which were expressly designed to diminish the visibility of untrustworthy news surrounding the 2020 U.S. presidential election. These changes were successful. “We applaud Facebook for implementing the more stringent news feed algorithm,” says Przemek Grabowicz, the paper’s senior author, who recently joined University College Dublin but conducted this research at UMass Amherst’s Manning College of Information and Computer Science. Bagchi, Grabowicz and their co-authors point out that the newer algorithm cut user views of misinformation by at least 24%. However, the changes were temporary, and in March 2021 the news algorithm reverted to its previous practice of promoting a higher fraction of untrustworthy news.

Guess et al.’s study ran from September 24 through December 23, 2020, and so substantially overlapped with the short window when Facebook’s news was determined by the more stringent algorithm. However, the Guess et al. paper did not clarify that its data captured an exceptional moment for the social media platform. “Their paper gives the impression that the standard Facebook algorithm is good at stopping misinformation,” says Grabowicz, “which is questionable.”

Part of the problem, as Bagchi, Grabowicz and their co-authors write, is that experiments such as the one run by Guess et al. have to be “preregistered,” which means that Meta could have known well ahead of time what the researchers would be looking for. And yet, social media companies are not required to make any public notification of significant changes to their algorithms. “This can lead to situations where social media companies could conceivably change their algorithms to improve their public image if they know they are being studied,” write the authors, who include Jennifer Lundquist (professor of sociology at UMass Amherst), Monideepa Tarafdar (Charles J. Dockendorff Endowed Professor at UMass Amherst’s Isenberg School of Management), Anthony Paik (professor of sociology at UMass Amherst) and Filippo Menczer (Luddy Distinguished Professor of Informatics and Computer Science at Indiana University).

Though Meta funded Guess et al.’s study and supplied 12 of its co-authors, the study’s authors write that “Meta did not have the right to prepublication approval.”

“Our results show that social media companies can mitigate the spread of misinformation by modifying their algorithms but may not have financial incentives to do so,” says Paik. “A key question is whether the harms of misinformation — to individuals, the public and democracy — should be more central in their business decisions.”