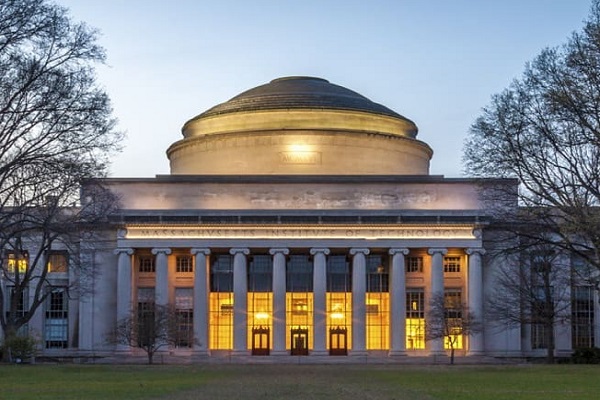

Massachusetts Institute of Technology: Looking forward to forecast the risks of a changing climate

Extreme weather events that were once considered rare have become noticeably less so, from intensifying hurricane activity in the North Atlantic to wildfires generating massive clouds of ozone-damaging smoke. But current climate models are unprepared when it comes to estimating the risk that these increasingly extreme events pose — and without adequate modeling, governments are left unable to take necessary precautions to protect their communities.

MIT Department of Earth, Atmospheric and Planetary Sciences (EAPS) Professor Paul O’Gorman researches this trend by studying how climate affects the atmosphere and incorporating what he learns into climate models to improve their accuracy. One particular focus for O’Gorman has been changes in extreme precipitation and midlatitude storms that hit areas like New England.

“These extreme events are having a lot of impact, but they’re also difficult to model or study,” he says. Seeing the pressing need for better climate models that can be used to develop preparedness plans and climate change mitigation strategies, O’Gorman and collaborators Kerry Emanuel, the Cecil and Ida Green Professor of Atmospheric Science in EAPS, and Miho Mazereeuw, associate professor in MIT’s Department of Architecture, are leading an interdisciplinary group of scientists, engineers, and designers to tackle this problem with their MIT Climate Grand Challenges flagship project, “Preparing for a new world of weather and climate extremes.”

“We know already from observations and from climate model predictions that weather and climate extremes are changing and will change more,” O’Gorman says. “The grand challenge is preparing for those changing extremes.”

Their proposal is one of five flagship projects recently announced by the MIT Climate Grand Challenges initiative — an Institute-wide effort catalyzing novel research and engineering innovations to address the climate crisis. Selected from a field of almost 100 submissions, the team will receive additional funding and exposure to help accelerate and scale their project goals. Other MIT collaborators on the proposal include researchers from the School of Engineering, the School of Architecture and Planning, the Office of Sustainability, the Center for Global Change Science, and the Institute for Data, Systems and Society.

Weather risk modeling

Fifteen years ago, Kerry Emanuel developed a simple hurricane model. It was based on physics equations, rather than statistics, and could run in real time, making it useful for risk assessment. Emanuel wondered if similar models could be used for long-term risk assessment of other things, such as changes in extreme weather because of climate change.

“I discovered, somewhat to my surprise and dismay, that almost all extant estimates of long-term weather risks in the United States are based not on physical models, but on historical statistics of the hazards,” says Emanuel. “The problem with relying on historical records is that they’re too short; while they can help estimate common events, they don’t contain enough information to make predictions for more rare events.”
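A rough calculation illustrates why short records struggle with rare events. The sketch below is not part of the team's work; it assumes each year is independent and that the climate is unchanging (the very assumption the project questions), and the function name is hypothetical. It computes the chance that a record of a given length contains even one event of a given return period.

```python
def chance_record_contains_event(record_years, return_period_years):
    """Probability that a record of `record_years` holds at least one
    event with the given return period.

    Assumes independent years and a fixed annual probability of
    1 / return_period_years (an illustrative simplification).
    """
    annual_probability = 1.0 / return_period_years
    # Chance of seeing no such event in any year of the record.
    chance_of_none = (1.0 - annual_probability) ** record_years
    return 1.0 - chance_of_none


if __name__ == "__main__":
    for return_period in (10, 100, 500):
        p = chance_record_contains_event(50, return_period)
        print(f"50-year record, 1-in-{return_period}-year event: {p:.3f}")
```

Under these assumptions, a 50-year record has roughly a 40 percent chance of containing a 1-in-100-year event and under a 10 percent chance of containing a 1-in-500-year event, so the record alone says little about how often such events occur.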

Another limitation of weather risk models that rely heavily on statistics: they have a built-in assumption that the climate is static.

“Historical records rely on the climate at the time they were recorded; they can’t say anything about how hurricanes grow in a warmer climate,” says Emanuel. The models rely on fixed relationships between events; they assume that hurricane activity will stay the same, even while science is showing that warmer temperatures will most likely push typical hurricane activity beyond the tropics and into a much wider band of latitudes.

The flagship project’s goal is to eliminate this reliance on the historical record by emphasizing physical principles (e.g., the laws of thermodynamics and fluid mechanics) in next-generation models. The downside is that many variables have to be included. Not only are there planetary-scale systems to consider, such as the global circulation of the atmosphere, but there are also small-scale, extremely localized events, like thunderstorms, that influence predictive outcomes.

Trying to compute all of these at once is costly and time-consuming — and the results often can’t tell you the risk in a specific location. But there is a way to correct for this: “What’s done is to use a global model, and then use a method called downscaling, which tries to infer what would happen on very small scales that aren’t properly resolved by the global model,” explains O’Gorman. The team hopes to improve downscaling techniques so that they can be used to calculate the risk of very rare but impactful weather events.

Global climate models, or general circulation models (GCMs), Emanuel explains, are constructed a bit like a jungle gym. Like the playground bars, the Earth is sectioned into an interconnected three-dimensional framework, only it’s divided 100 to 200 square kilometers at a time. Each node comprises a set of computations for characteristics like wind, rainfall, atmospheric pressure, and temperature within its bounds; the outputs of each node are connected to those of its neighbors. This framework is useful for creating a big-picture idea of Earth’s climate system, but if you tried to zoom in on a specific location (say, to see what’s happening in Miami or Mumbai), the connecting nodes are too far apart to make predictions on anything specific to those areas.

Scientists work around this problem by using downscaling. They use the same blueprint of the jungle gym, but within the nodes they weave a mesh of smaller features, incorporating equations for things like topography and vegetation or regional meteorological models to fill in the blanks. By creating a finer mesh over smaller areas they can predict local effects without needing to run the entire global model.
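As a toy illustration of the idea, the sketch below fills in one coarse grid cell in two steps: it interpolates the temperatures at the cell's four corner nodes to a point inside the cell, then corrects for local topography using a fixed lapse rate. This is a deliberately simple stand-in, not the method the MIT team or any particular model uses; the function names are hypothetical, and the lapse rate of 6.5 K per kilometer is the standard-atmosphere value, assumed here for simplicity.

```python
# Assumed constant: standard-atmosphere lapse rate (kelvin per meter).
LAPSE_RATE_K_PER_M = 0.0065


def bilinear(corners, fx, fy):
    """Interpolate inside one coarse cell.

    corners: values at (x0, y0), (x1, y0), (x0, y1), (x1, y1).
    fx, fy: fractional position inside the cell, each in [0, 1].
    """
    v00, v10, v01, v11 = corners
    bottom = v00 * (1 - fx) + v10 * fx
    top = v01 * (1 - fx) + v11 * fx
    return bottom * (1 - fy) + top * fy


def downscale_temperature(coarse_temps, coarse_elevs_m, fx, fy, local_elev_m):
    """Estimate temperature at a point inside a coarse cell.

    Step 1: smooth interpolation of the coarse-node temperatures.
    Step 2: topographic correction for the gap between the real local
            elevation and the smooth elevation the coarse grid implies.
    """
    smooth_temp = bilinear(coarse_temps, fx, fy)
    smooth_elev = bilinear(coarse_elevs_m, fx, fy)
    return smooth_temp - LAPSE_RATE_K_PER_M * (local_elev_m - smooth_elev)


if __name__ == "__main__":
    temps_c = (20.0, 22.0, 18.0, 20.0)      # made-up corner temperatures
    elevs_m = (100.0, 100.0, 100.0, 100.0)  # made-up corner elevations
    # A mountain site at 1,100 m in the middle of the cell.
    print(downscale_temperature(temps_c, elevs_m, 0.5, 0.5, 1100.0))
```

With these made-up inputs, the coarse grid alone would suggest about 20 degrees Celsius at the center of the cell, while the elevation correction brings the mountain site down to about 13.5. Real downscaling replaces this single correction with regional meteorological models or far richer physical relationships.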

Of course, even this finer-resolution solution has its trade-offs. Nesting models within models can give a clearer picture of what’s happening in a specific region, but crunching all that data at once is still a computing challenge. The cost is either expense and time or predictions limited to shorter windows: where GCMs can be run over decades or centuries, a particularly complex local model may be restricted to timescales of just a few years at a time.

“I’m afraid that most of the downscaling at present is brute force, but I think there’s room to do it in better ways,” says Emanuel, who sees the problem of finding new and novel methods of achieving this goal as an intellectual challenge. “I hope that through the Grand Challenges project we might be able to get students, postdocs, and others interested in doing this in a very creative way.”

Adapting to weather extremes for cities and renewable energy

Improving climate modeling is more than a scientific exercise in creativity, however. There’s a very real application for models that can accurately forecast risk in localized regions.

Another problem is that progress in climate modeling has not kept up with the need for climate mitigation plans, especially in some of the most vulnerable communities around the globe.

“It is critical for stakeholders to have access to this data for their own decision-making process. Every community is composed of a diverse population with diverse needs, and each locality is affected by extreme weather events in unique ways,” says Mazereeuw, the director of the MIT Urban Risk Lab.

A key piece of the team’s project is building on partnerships the Urban Risk Lab has developed with several cities to test their models once they have a usable product up and running. The cities were selected based on their vulnerability to increasing extreme weather events, such as tropical cyclones in Broward County, Florida, and Toa Baja, Puerto Rico, and extratropical storms in Boston, Massachusetts, and Cape Town, South Africa.

In their proposal, the team outlines a variety of deliverables that the cities can ultimately use in their climate change preparations. Ideas include online interactive platforms and workshops with stakeholders (such as local governments, developers, nonprofits, and residents) to learn directly what specific tools they need for their local communities. With those tools, the cities can craft plans addressing different scenarios in their region, involving events such as sea-level rise or heat waves, and can develop the infrastructure adaptation strategies that will be most effective and efficient for them under these conditions.

“We are acutely aware of the inequity of resources both in mitigating impacts and recovering from disasters. Working with diverse communities through workshops allows us to engage a lot of people, listen, discuss, and collaboratively design solutions,” says Mazereeuw.

By the end of five years, the team is hoping that they’ll have better risk assessment and preparedness tool kits, not just for the cities that they’re partnering with, but for others as well.

“MIT is well-positioned to make progress in this area,” says O’Gorman, “and I think it’s an important problem where we can make a difference.”